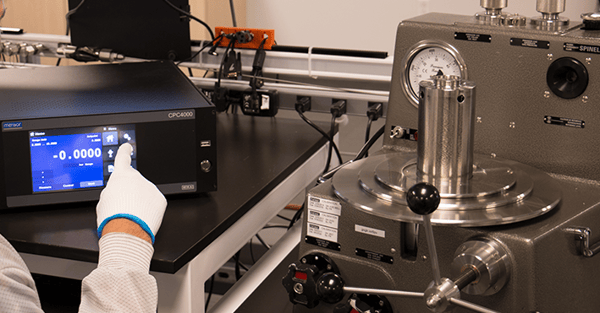

Calibrators and calibrations cost money, and the more accurate the calibrator, the more expensive it is. So a very important question when deciding which calibrator to buy is: how accurate does my calibrator need to be? You have most likely heard of the 10:1 or 4:1 ‘rules,’ but there is more to it than that.

Test Accuracy Ratio vs. Test Uncertainty Ratio

A couple of definitions first:

Test Accuracy Ratio (TAR) is the ratio of the accuracy of the device being calibrated to the accuracy of the calibrator, taken from the published accuracy specs of each. For example, a device specified at ±0.1 kPa checked with a calibrator specified at ±0.025 kPa gives a TAR of 4:1.

Test Uncertainty Ratio (TUR) compares the tolerance of the device under test (DUT) to the uncertainty of the measurement process. It is a more stringent measure than TAR because it takes into account the uncertainty of the entire measurement process, of which the accuracy of the calibrator is only one component.
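The difference between the two ratios can be sketched with a few lines of Python. All numbers here are hypothetical, and the root-sum-square combination assumes the uncertainty components are uncorrelated and expressed at the same coverage level; a real uncertainty budget may need more care.

```python
import math

def combined_uncertainty(components):
    """Root-sum-square of uncorrelated uncertainty components (same units)."""
    return math.sqrt(sum(c * c for c in components))

# Hypothetical values in kPa, chosen only for illustration.
dut_tolerance = 0.1            # published DUT spec, +/- kPa
calibrator_accuracy = 0.02     # published calibrator spec, +/- kPa
other_components = [0.01, 0.015]  # assumed: e.g. resolution, environment

# TAR looks only at the two published specs.
tar = dut_tolerance / calibrator_accuracy

# TUR uses the uncertainty of the whole measurement process.
process_uncertainty = combined_uncertainty([calibrator_accuracy] + other_components)
tur = dut_tolerance / process_uncertainty

print(f"TAR = {tar:.1f}:1")
print(f"TUR = {tur:.1f}:1")
```

With these assumed numbers the TAR is 5:1, but once the other components are included the TUR drops below 4:1.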

For many years, a TAR of 10:1 was the industry norm. As time went on, technological advancements made both test equipment and DUTs increasingly accurate, even the relatively low-cost ones, and a TAR of 4:1 became the generally accepted norm. ANSI/NCSL Z540-1 requires a TUR of 4:1. Section 10.2, paragraph B of this standard states: “the collective uncertainty of the measurement standards shall not exceed 25% of the acceptable tolerance for each characteristic of the measuring and test equipment being calibrated or verified.”
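Read as a calculation, the 25% requirement gives the largest measurement uncertainty you can afford for a given DUT tolerance. A minimal sketch, with hypothetical function names and values:

```python
def max_allowed_uncertainty(dut_tolerance, required_tur=4.0):
    """Largest process uncertainty that still meets the required TUR."""
    return dut_tolerance / required_tur

def meets_tur(dut_tolerance, process_uncertainty, required_tur=4.0):
    """True if the process uncertainty is within the allowed fraction of the tolerance."""
    return process_uncertainty <= max_allowed_uncertainty(dut_tolerance, required_tur)

# Hypothetical DUT with a +/-0.1 kPa tolerance.
limit = max_allowed_uncertainty(0.1)
print(f"Uncertainty must not exceed {limit:.3f} kPa")
print(meets_tur(0.1, 0.02))  # 0.02 kPa is within 25% of 0.1 kPa
print(meets_tur(0.1, 0.04))  # 0.04 kPa is 40% of the tolerance
```

For a ±0.1 kPa DUT this works out to 0.025 kPa for the whole measurement process, not just for the calibrator's published accuracy.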

The concern is that the closer in accuracy the DUT is to the calibrator, the higher the probability of false accepts (the DUT is found to be in spec when it actually isn’t) and false rejects (the DUT is found to be out of spec when it is actually in spec). False accepts are the much larger concern because they can propagate down the traceability chain: a falsely accepted instrument goes on to calibrate or test other things. In some cases, this leads to subpar products being manufactured in critical industries (food, chemical, aerospace components), with potentially fatal results.

The root of this problem is uncertainty. If a measurement is close to the tolerance limit, the uncertainty in the calibration process may be large enough to cause a false accept or a false reject. The risk grows as TURs shrink. ANSI Z540 specifies that the false-accept risk be no more than 2%.
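To see how false-accept risk depends on TUR, the sketch below integrates it numerically under a simple model. The model is an assumption made for illustration, not something the standard prescribes: DUT errors and measurement errors are both normally distributed and centred on zero, 95% of DUTs are truly in tolerance, the TUR is defined against an expanded uncertainty at k = 2, and the tolerance is normalised to ±1.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()

def false_accept_probability(tur, in_tolerance_prob=0.95,
                             accept_limit=1.0, steps=4000):
    """Probability that a DUT is out of tolerance AND is accepted.

    Tolerance is normalised to +/-1; accept_limit is the (possibly
    guard-banded) acceptance limit in the same units.
    """
    # Spread of true DUT errors implied by the assumed in-tolerance probability.
    sigma_dut = 1.0 / STD_NORMAL.inv_cdf(0.5 + in_tolerance_prob / 2.0)
    # Standard uncertainty of the measurement: U = tolerance / TUR at k = 2.
    sigma_meas = (1.0 / tur) / 2.0
    dut = NormalDist(0.0, sigma_dut)
    meas = NormalDist(0.0, sigma_meas)

    # Midpoint integration over true errors above +1; doubled by symmetry.
    upper = 1.0 + 8.0 * sigma_dut
    width = (upper - 1.0) / steps
    total = 0.0
    for i in range(steps):
        e = 1.0 + (i + 0.5) * width
        # Chance the observed value e + m still lands inside the acceptance limits.
        p_accept = meas.cdf(accept_limit - e) - meas.cdf(-accept_limit - e)
        total += dut.pdf(e) * p_accept * width
    return 2.0 * total

for tur in (2, 4, 10):
    print(f"TUR {tur}:1 -> false-accept risk {false_accept_probability(tur):.2%}")
```

Under these assumptions the risk falls as the TUR rises, and at 4:1 it comes out under 1%. A lower in-tolerance probability or a different distribution would change the numbers, which is why the ratio alone does not settle the question.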

Test Uncertainty Ratios and Guard-banding

The solution to this problem is ‘guard-banding.’ This technique looks at how large the calibration process uncertainty is and determines the likelihood that it could cause a false accept. As a simple example, if the DUT specification is ±0.1 kPa and the DUT reads 0.07 kPa away from the reference value, it would appear to be well within specification. But if the calibration process has a measurement uncertainty of 0.04 kPa, the true error could be as much as 0.11 kPa (i.e. the measured error plus the measurement uncertainty), and therefore the DUT could actually be out of specification.

Guard-banding is the process of calculating a tightened tolerance window to apply to the measurement to avoid these false-accept situations. In this example, we would need our measured error to be no more than 0.06 kPa even though the specification is 0.1 kPa, so that the measured error plus the 0.04 kPa uncertainty would still be within 0.1 kPa. We would be applying a guard-band of 0.04 kPa, which leaves an acceptance limit of 0.06 kPa, or 60% of the specification.
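The arithmetic of this example can be sketched as follows. The function names and the three-way outcome are illustrative choices, not part of any standard; the guard-band here is simply the full measurement uncertainty, as in the example above.

```python
def acceptance_limit(tolerance, uncertainty):
    """Tolerance tightened by the full measurement uncertainty."""
    return tolerance - uncertainty

def decide(measured_error, tolerance, uncertainty):
    """Classify a reading against the guard-banded acceptance limit."""
    limit = acceptance_limit(tolerance, uncertainty)
    if abs(measured_error) <= limit:
        return "accept"
    if abs(measured_error) <= tolerance:
        # Inside the spec, but too close to it to rule out a false accept.
        return "indeterminate"
    return "reject"

tolerance = 0.1     # kPa, DUT specification
uncertainty = 0.04  # kPa, calibration process uncertainty

limit = acceptance_limit(tolerance, uncertainty)
print(f"Acceptance limit: {limit:.2f} kPa ({limit / tolerance:.0%} of spec)")
print(decide(0.07, tolerance, uncertainty))
print(decide(0.05, tolerance, uncertainty))
```

The 0.07 kPa reading from the example falls between the 0.06 kPa acceptance limit and the 0.1 kPa specification, so it cannot be passed with confidence.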

In practice, the TUR and the uncertainties in the calibration process must both be taken into account to determine the size of the guard-band needed to meet the Z540 requirement of a false-accept risk of 2% or less. This guard-band will look quite different for each type of DUT and the measurement standard it is calibrated with.
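One published way to size the guard-band from the TUR alone is the formula commonly cited as Method 6 of the ANSI/NCSL Z540.3 Handbook, in which the guard-band is the expanded uncertainty multiplied by 1.04 - exp(0.38 ln(TUR) - 0.54). The sketch below applies it as an illustration only; it is not necessarily the calculation this article has in mind, and the function names are hypothetical.

```python
import math

def guard_band_multiplier(tur):
    """Fraction of the expanded uncertainty to subtract from the tolerance.

    Uses the TUR-based formula commonly cited as Z540.3 Handbook Method 6.
    Clamped at zero: above roughly 4.6:1 the formula calls for no guard-band.
    """
    return max(0.0, 1.04 - math.exp(0.38 * math.log(tur) - 0.54))

def guarded_acceptance_limit(tolerance, expanded_uncertainty):
    """Acceptance limit after applying the TUR-based guard-band."""
    tur = tolerance / expanded_uncertainty
    return tolerance - expanded_uncertainty * guard_band_multiplier(tur)

# Values from the example above: 0.1 kPa tolerance, 0.04 kPa uncertainty (TUR 2.5:1).
limit = guarded_acceptance_limit(0.1, 0.04)
print(f"Acceptance limit: {limit:.4f} kPa")
print(f"Multiplier at 4:1: {guard_band_multiplier(4.0):.3f}")
```

This gives a much smaller guard-band than subtracting the full uncertainty, because it targets a 2% false-accept risk rather than ruling out false accepts entirely.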

The guard-band calculation can help you follow the 4:1 TUR rule of Z540-1 and determine how accurate a calibrator you need to perform calibrations on your DUTs.
