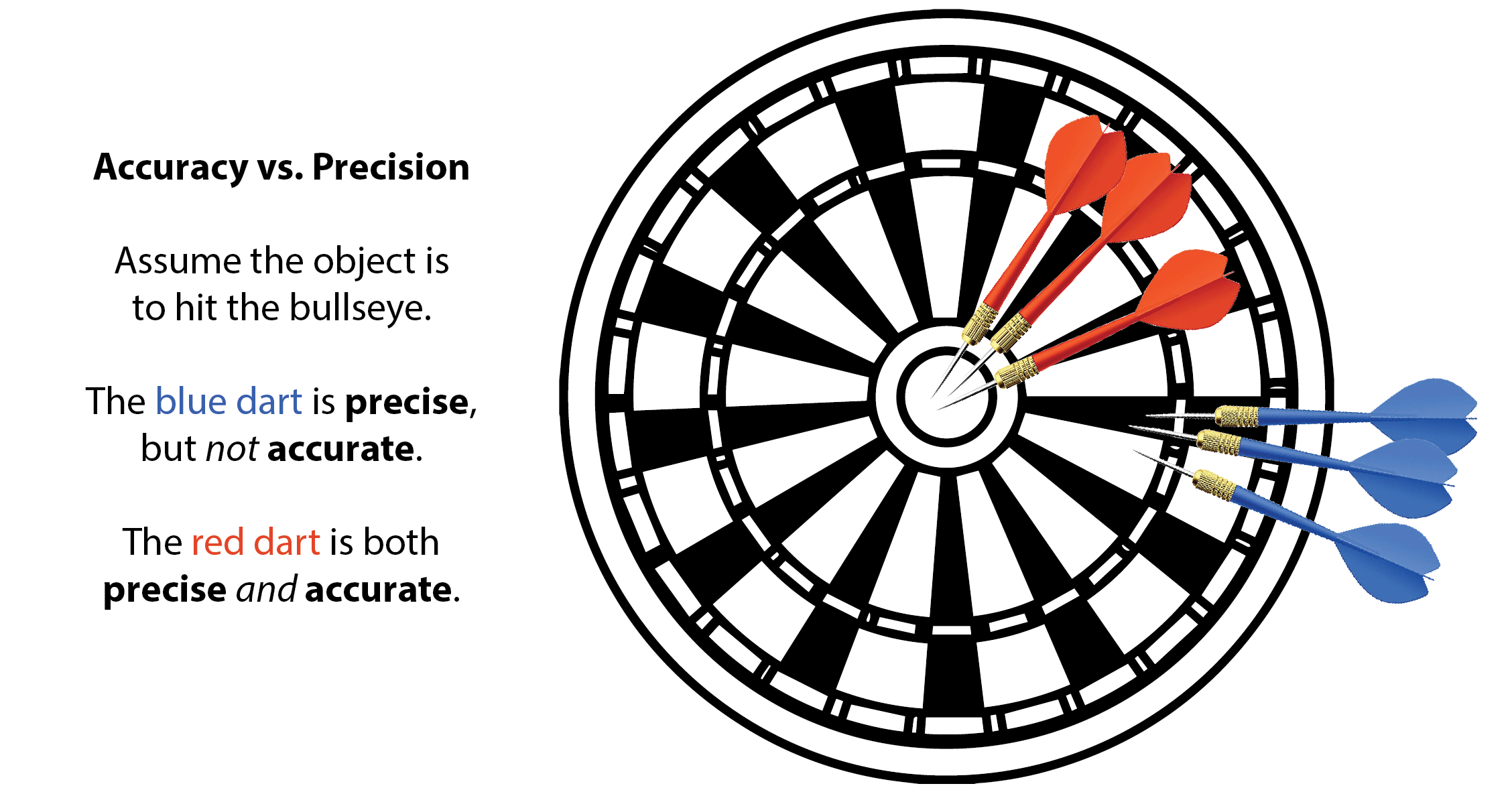

The Oxford Dictionary defines accuracy as (1) the quality or state of being correct or precise, and (2) the degree to which the result of a measurement, calculation or specification conforms to the correct value or standard. In pressure and temperature measurement and calibration, accuracy is how close a reading is to the true value: the smaller the error, the more accurate the reading. Compare that with precision, which is the repeatability of results. A precise instrument returns nearly the same reading every time, even if that reading is consistently off from the true value.

Let’s use a game of darts as an example. If your opponent aims for the bullseye and throws their darts, but consistently hits the same mark a few inches to the right, they are precise, but not accurate. You, on the other hand, hit the bullseye every time. Not only is your aim precise, but more importantly, it is accurate.
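To put numbers on the darts analogy, here is a minimal sketch in Python. It assumes a set of repeated readings taken against a known reference value; the function name `summarize_readings` and the sample readings are hypothetical and chosen only for illustration. The average offset from the reference stands in for accuracy, and the spread of the readings stands in for precision.

```python
from statistics import mean, stdev

def summarize_readings(readings, true_value):
    """Return (bias, spread) for repeated readings of a known reference value."""
    # Accuracy: how far the average reading sits from the true value.
    bias = mean(readings) - true_value
    # Precision: how tightly the readings cluster (sample standard deviation).
    spread = stdev(readings)
    return bias, spread

# Hypothetical gauge readings (psi) against a 100.00 psi reference.
opponent = [102.01, 102.00, 101.99, 102.00]  # tight grouping, but offset
you = [100.01, 100.00, 99.99, 100.00]        # tight grouping, on target

opp_bias, opp_spread = summarize_readings(opponent, 100.0)
you_bias, you_spread = summarize_readings(you, 100.0)

print(f"opponent: bias={opp_bias:+.3f} psi, spread={opp_spread:.3f} psi")
print(f"you:      bias={you_bias:+.3f} psi, spread={you_spread:.3f} psi")
```

Both sets of readings have the same small spread, so both are equally precise. Only the second set has a bias near zero, which is what makes it accurate as well.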

Why is accuracy important in pressure, temperature and calibration work? Readings must be as close to the true value as possible to ensure consistency and, ultimately, safety. In calibration, the accuracy of an instrument depends on the reference standards used to perform the calibration, the ambient conditions of the laboratory and the calibration process itself.
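One common way to see how these three contributors add up is a simple uncertainty budget. The sketch below assumes the contributions are independent and expressed as standard uncertainties in the same unit, so they can be combined by root-sum-of-squares. The component values, the function name and the coverage factor of 2 (roughly 95 % confidence for a normal distribution) are illustrative assumptions, not figures from any particular laboratory.

```python
import math

def combined_standard_uncertainty(components):
    """Combine independent standard uncertainties by root-sum-of-squares."""
    return math.sqrt(sum(u ** 2 for u in components.values()))

# Hypothetical standard uncertainties, all in psi (illustrative values only).
budget = {
    "reference_standard": 0.02,
    "ambient_conditions": 0.03,
    "calibration_process": 0.06,
}

u_combined = combined_standard_uncertainty(budget)

# Assumed coverage factor k = 2 for an expanded uncertainty.
k = 2
u_expanded = k * u_combined

print(f"combined standard uncertainty: {u_combined:.3f} psi")
print(f"expanded uncertainty (k={k}):   {u_expanded:.3f} psi")
```

In this made-up budget the calibration process dominates the total, so improving the reference standard alone would change the result very little.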

Instruments must be properly calibrated to keep the uncertainty of their readings as low as possible. Whether you work in aerospace or pharmaceuticals, regular and proper calibration is a reliability and safety issue. Accurate readings help ensure that aircraft hold their specified altitude and that medications contain safe amounts of each ingredient.
