Accuracy specifications have become more confusing than ever, with manufacturers stating their technical specifications in different ways. As products grow more technologically advanced and complex, it has become difficult to describe every product with a common specification terminology.

Accuracy Measurement Specifications

The two most common terms used to express the accuracy of a measurement or calibration instrument are “% of reading” and “% of full scale.” However, in most products the two are not used independently of each other.

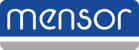

The “% of full scale” specification represents a static accuracy (a fixed fraction of the full scale value) that stays the same throughout the range of the instrument, while “% of reading” represents a dynamic accuracy that changes across the range with the current reading. A pure “% of reading” accuracy cannot hold at very low readings or at zero, because the permitted error would theoretically shrink to zero as the reading approaches zero. This makes it impractical to specify an instrument with a “% of reading” accuracy alone.
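To make the difference concrete, here is a minimal sketch that computes the permitted error band under each type of specification. The 100 psi full scale and the 0.01 % accuracy figure are hypothetical values chosen for illustration, not the specification of any particular instrument.

```python
def tolerance_pct_full_scale(pct: float, full_scale: float) -> float:
    """'% of full scale' spec: the same tolerance at every reading in the range."""
    return pct / 100.0 * full_scale


def tolerance_pct_reading(pct: float, reading: float) -> float:
    """'% of reading' spec: the tolerance scales with the current reading."""
    return pct / 100.0 * abs(reading)


FULL_SCALE = 100.0  # hypothetical 100 psi range
PCT = 0.01          # hypothetical 0.01 % accuracy

for reading in (0.0, 10.0, 50.0, 100.0):
    fs = tolerance_pct_full_scale(PCT, FULL_SCALE)
    rd = tolerance_pct_reading(PCT, reading)
    print(f"{reading:6.1f} psi  % FS: ±{fs:.4f} psi  % reading: ±{rd:.4f} psi")
```

The “% of full scale” band stays at ±0.01 psi everywhere, while the “% of reading” band collapses to zero at a zero reading, which is exactly why a pure “% of reading” figure cannot be guaranteed at the bottom of the range.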

Most instrument manufacturers use a combined “% of reading” and “% of full scale” specification to overcome this problem. Such specifications are lengthy to read and often tricky to interpret, because they require the user to calculate the accuracy at every point of interest in the range.
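As an illustration of that per-point calculation, the sketch below assumes a combined spec written in the additive form “±(0.01 % of reading + 0.005 % of full scale)” on a hypothetical 100 psi instrument. Both the numbers and the additive form are assumptions; manufacturers combine the two terms in different ways, so the datasheet wording always governs.

```python
def combined_tolerance(reading: float, full_scale: float,
                       pct_reading: float, pct_fs: float) -> float:
    """Assumed additive spec: ±(pct_reading % of reading + pct_fs % of full scale)."""
    return pct_reading / 100.0 * abs(reading) + pct_fs / 100.0 * full_scale


FULL_SCALE = 100.0  # hypothetical 100 psi range

for reading in (0.0, 10.0, 50.0, 100.0):
    tol = combined_tolerance(reading, FULL_SCALE, pct_reading=0.01, pct_fs=0.005)
    # Restate the same tolerance as an effective % of reading (undefined at zero).
    effective = f"{tol / reading * 100:.3f} % of reading" if reading else "n/a"
    print(f"{reading:6.1f} psi: ±{tol:.4f} psi ({effective})")
```

Every point in the range needs its own calculation, and the effective “% of reading” grows sharply at low readings because the full scale term dominates there.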

Intelliscale Accuracy Measurement Specification

Intelliscale provides an easy solution to this problem by combining the “% of reading” and “% of full scale” accuracies into a single “% of Intelliscale” accuracy that is valid throughout the range of the instrument. It is denoted “% of IS-X,” where “X” represents the point in the instrument’s range at which the accuracy switches between “% of full scale” and “% of reading.”
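One way to picture a “% of IS-X” specification is the minimal sketch below. It assumes that X is a percentage of full scale and that, below that point, the tolerance is held at the value it has at the switch point (the stated percentage of X % of full scale), becoming a plain “% of reading” above it. This is an illustrative interpretation only, not Mensor’s published formula; the 0.01 % IS-50 figure and the 100 psi range are hypothetical.

```python
def intelliscale_tolerance(reading: float, full_scale: float,
                           pct: float, x_pct: float) -> float:
    """
    Assumed interpretation of a '±pct % of IS-X' spec:
    below the switch point (x_pct % of full scale) the tolerance is fixed at
    pct % of the switch point; at and above it, the tolerance is pct % of reading.
    """
    switch_point = x_pct / 100.0 * full_scale
    # max() keeps the tolerance continuous where the two regions meet.
    return pct / 100.0 * max(abs(reading), switch_point)


FULL_SCALE = 100.0  # hypothetical 100 psi range

for reading in (0.0, 25.0, 50.0, 80.0, 100.0):
    tol = intelliscale_tolerance(reading, FULL_SCALE, pct=0.01, x_pct=50.0)
    print(f"{reading:6.1f} psi: ±{tol:.4f} psi")
```

Under this reading, a single figure covers the whole range: a flat band up to the switch point and a band proportional to the reading above it.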

To understand Intelliscale accuracy in detail, refer to our “Understanding Intelliscale Accuracy” flyer, which graphically represents the various Intelliscale accuracies Mensor offers.
